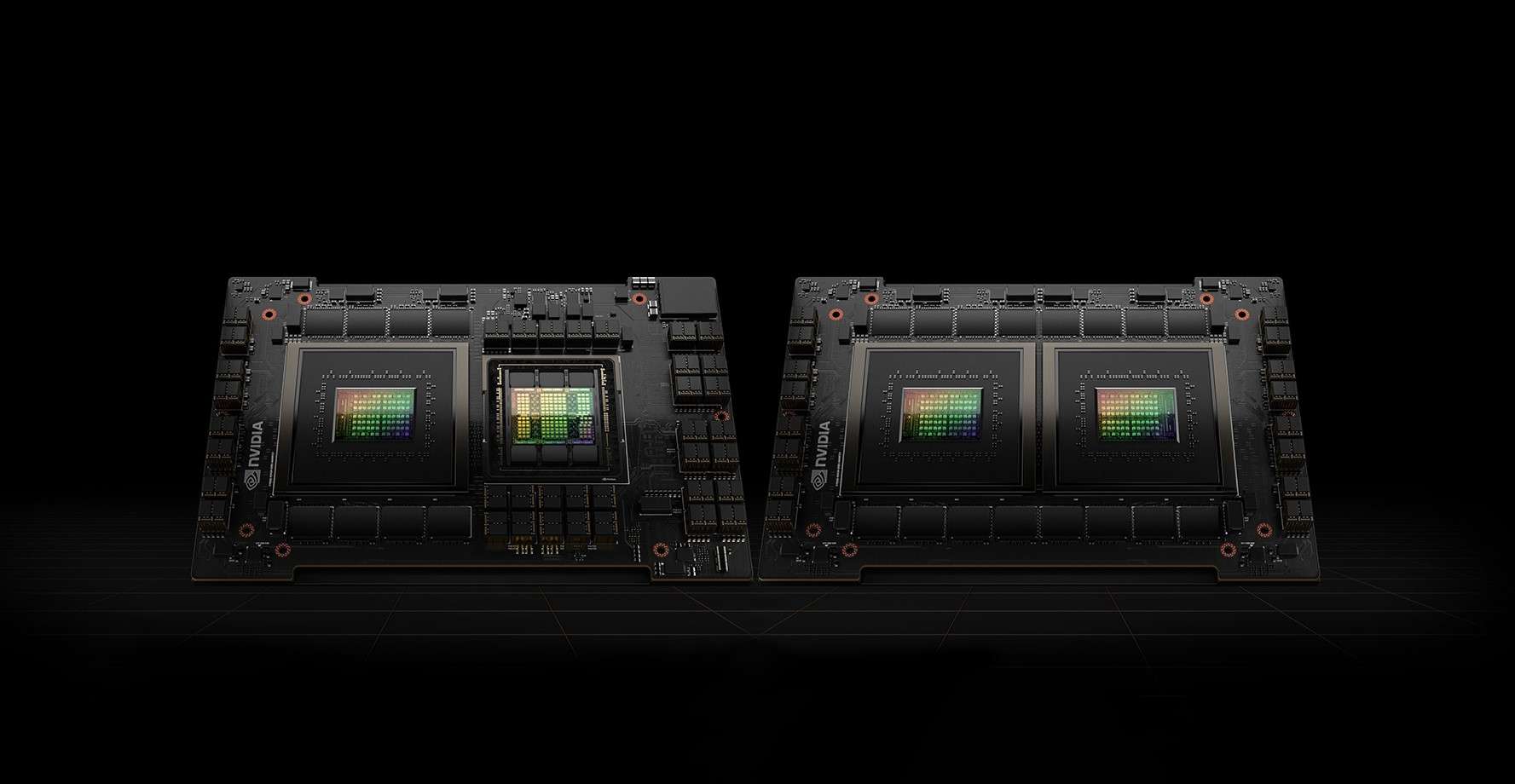

Nvidia has just unveiled the DGX GH200, a next-generation supercomputer for training artificial intelligence. Built on the new Grace Hopper chips, it reaches one exaflop of computing power.

After Intel last week, it is Nvidia's turn to announce new chips and a new supercomputer dedicated to artificial intelligence. At Computex this week in Taiwan, the manufacturer introduced the DGX GH200, a supercomputer designed to train the large language models (LLMs) that form the basis of generative artificial intelligence tools like ChatGPT.

The system will consist of 256 GH200 “Grace Hopper” superchips with 144 TB of shared memory, or about 500 times the memory of its predecessor, the DGX A100. For optimal operation, Nvidia has combined the Grace CPU and the Hopper H100 Tensor Core GPU into a single package to create the new GH200 “superchip”. In all, the DGX GH200 supercomputer offers one exaflop of computing power, or half that of Intel's Aurora supercomputer, due out this year.
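As a rough sanity check on the memory figures quoted above, the arithmetic can be sketched in a few lines of Python. This is only a back-of-the-envelope calculation: it assumes that 1 TB means 1024 GB, which the announcement does not specify, and the variable names are purely illustrative.

```python
# Back-of-the-envelope check of the memory figures quoted in the article.
# Assumption: 1 TB is treated as 1024 GB (binary units); the article does not say.
GB_PER_TB = 1024

total_memory_tb = 144   # shared memory of the DGX GH200, from the article
superchip_count = 256   # number of GH200 superchips, from the article
memory_ratio = 500      # "500 times" the predecessor, from the article

total_memory_gb = total_memory_tb * GB_PER_TB

# Share of the memory pool contributed by each superchip.
memory_per_superchip_gb = total_memory_gb / superchip_count

# Memory the predecessor would need for the 500x claim to hold exactly.
implied_predecessor_gb = total_memory_gb / memory_ratio

print(f"Memory per superchip: {memory_per_superchip_gb:.0f} GB")
print(f"Implied predecessor memory: {implied_predecessor_gb:.0f} GB")
```

Under that assumption, each superchip contributes 576 GB to the shared pool, and the 500-fold claim implies a predecessor with roughly 295 GB of memory.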

The supercomputer will be available this year

The components of the superchip are linked by Nvidia's NVLink-C2C interconnect, which delivers seven times the bandwidth of a PCI Express connection while using one-fifth of the power. In addition, all of the supercomputer's GPUs will be able to work together as a single unit, which simplifies programming. The previous generation could only combine GPUs in groups of eight.

Nvidia plans to release the DGX GH200 supercomputer by the end of the year. The manufacturer said that Google Cloud, Meta, and Microsoft are expected to be among the first customers.
